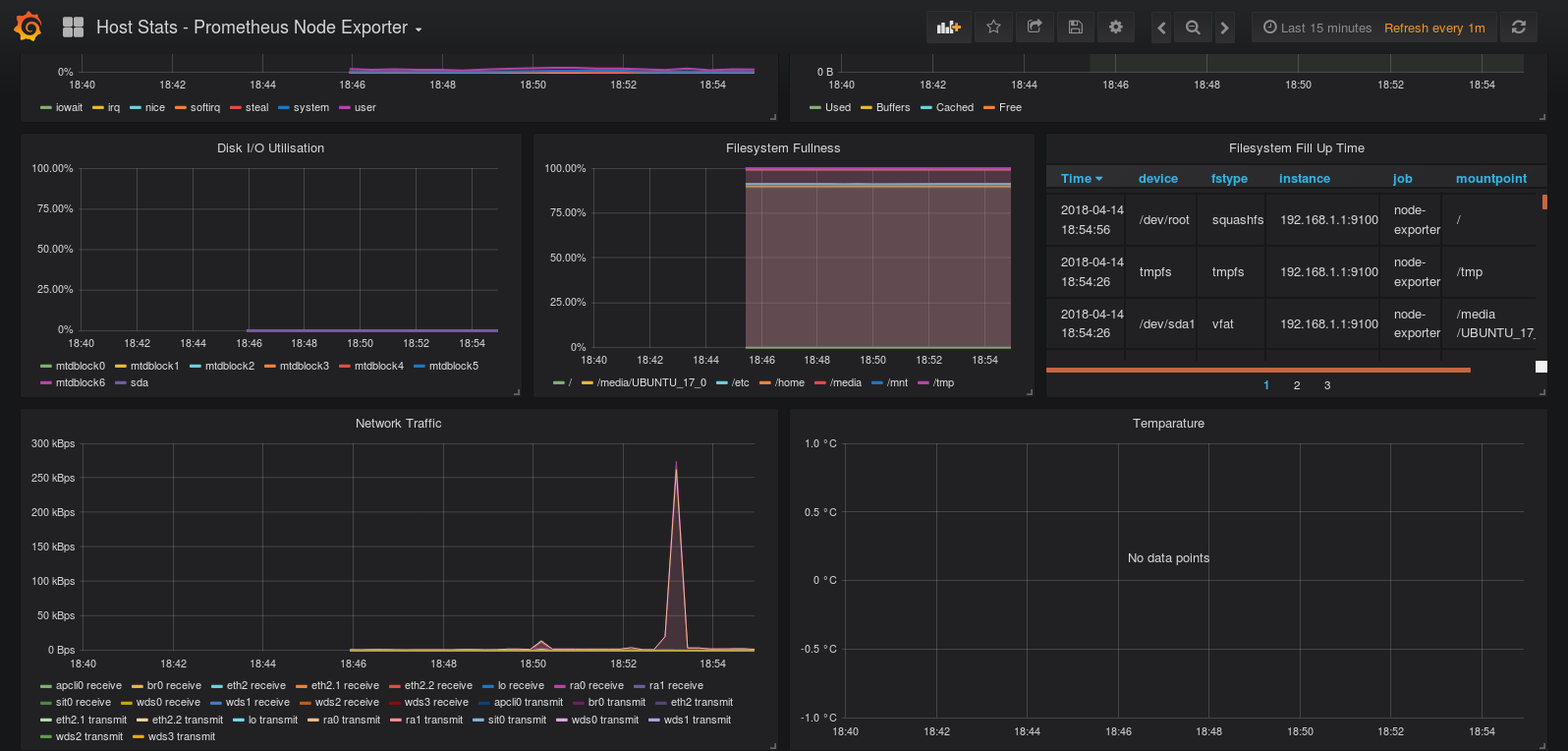

And if we wanted to see the cache and buffer data itself, we would use node_memory_Cached_bytes and node_memory_Buffers_bytes, respectively. To see the amount of available memory, including caches and buffers that can be freed up, we would use node_memory_MemAvailable_bytes. Here, node_memory_MemFree_bytes denotes the amount of free memory left on the system, not including caches and buffers that can be cleared. While on its own this is not the most helpful number, it helps us calculate the amount of in-use memory: node_memory_MemTotal_bytes - node_memory_MemFree_bytes. node_memory_MemTotal_bytes gives us the total amount of memory on the server. In other words, if we have 64 GB of memory, then this will always be 64 GB until we allocate more.

The other targets are working (in the Prometheus UI) and I even got data, but not for node-exporter and kube-state-metrics. In the stack, everything gets deployed correctly, but somehow Prometheus can't find node-exporter and kube-state-metrics.

The metric expressions listed above give us essentially the same data as free, but as a time series in which we can watch trends over time or compare memory between multiple system builds. Everything works but the kube-prometheus-stack.

Navigate to localhost:9090/graph in your browser and use the main expression bar at the top of the page to enter expressions. Those who do a bit of systems administration, incident response, and the like have probably used free before to check the memory of a system. Now that Prometheus is scraping metrics from a running Node Exporter instance, you can explore those metrics using the Prometheus UI (aka the expression browser).
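The in-use memory expression can also be evaluated outside the expression browser, through the Prometheus HTTP API. The following is a minimal sketch, assuming Prometheus is reachable at localhost:9090 as in the text; the helper names (build_query_url, parse_instant_vector, fetch_in_use_bytes) are hypothetical and chosen for illustration only.

```python
import json
import urllib.request
from urllib.parse import urlencode

# Assumption: Prometheus listens on localhost:9090, as in the text above.
PROMETHEUS_URL = "http://localhost:9090"

# In-use memory, as described in the text: total minus free.
IN_USE_EXPR = "node_memory_MemTotal_bytes - node_memory_MemFree_bytes"


def build_query_url(expr, base_url=PROMETHEUS_URL):
    """Build an instant-query URL for the /api/v1/query endpoint."""
    return f"{base_url}/api/v1/query?{urlencode({'query': expr})}"


def parse_instant_vector(payload):
    """Map each series' 'instance' label to its sample value as a float.

    An instant-vector result is a list of entries of the form
    {"metric": {...labels...}, "value": [timestamp, "value-as-string"]}.
    """
    if payload.get("status") != "success":
        raise ValueError(f"query failed: {payload}")
    return {
        series["metric"].get("instance", ""): float(series["value"][1])
        for series in payload["data"]["result"]
    }


def fetch_in_use_bytes(base_url=PROMETHEUS_URL):
    """Run the in-use memory query against a live Prometheus server."""
    with urllib.request.urlopen(build_query_url(IN_USE_EXPR, base_url), timeout=5) as resp:
        return parse_instant_vector(json.load(resp))
```

Calling fetch_in_use_bytes() needs a running Prometheus with a Node Exporter target. An empty dictionary means the query matched no series, which is one quick way to confirm the "target is UP but no data" symptom.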
However, of the vast array of memory information we have access to, there are only a few core metrics we will have to concern ourselves with much of the time. Memory metrics for Prometheus and other monitoring systems are retrieved through the /proc/meminfo file. In Prometheus in particular, these metrics are prefixed with node_memory in the expression editor, and quite a number of them exist. This is called an exporter in Prometheus vocabulary and will be used as a target in the Prometheus configuration.
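To make the link between /proc/meminfo and the node_memory metrics concrete, here is a rough sketch that parses meminfo-style text into similarly named values. It only approximates the exporter's naming (kB fields converted to bytes and given a _bytes suffix) and is not Node Exporter's actual code; parse_meminfo is a hypothetical helper.

```python
import re


def parse_meminfo(text):
    """Turn /proc/meminfo-style text into node_memory_* style names.

    Assumption: fields reported in kB are converted to bytes and given a
    "_bytes" suffix; unitless fields keep their raw value and get no suffix.
    """
    metrics = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(":")
        fields = rest.split()
        if not sep or not fields:
            continue  # skip blank or malformed lines
        value = int(fields[0])
        # Replace characters that are not valid in metric names, e.g. "Active(anon)".
        key = re.sub(r"[^A-Za-z0-9_]", "_", name.strip()).strip("_")
        if len(fields) > 1 and fields[1] == "kB":
            metrics[f"node_memory_{key}_bytes"] = value * 1024
        else:
            metrics[f"node_memory_{key}"] = value
    return metrics


def in_use_bytes(metrics):
    """In-use memory as described in the text: total minus free."""
    return metrics["node_memory_MemTotal_bytes"] - metrics["node_memory_MemFree_bytes"]
```

On a Linux host, passing the contents of /proc/meminfo to parse_meminfo and then calling in_use_bytes gives a one-off reading of the same figure Prometheus records over time.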

It will expose metrics that Prometheus will then be able to retrieve. When it comes to looking at our memory metrics, there are a few core metrics we want to consider. Node Exporter is a piece of software that you can install on *NIX kernels (Linux, OpenBSD, FreeBSD or Darwin). Run stress -m 1 on your server before starting this lesson.

Thanks. I generated an access key and tried it in my browser, and now I am able to view the below.

Please share some pointers to resolve this issue. $ curl -X GET Empty response for the above commands. There is no data for node-exporter metrics through the Prometheus web app. All targets for node-exporter in the web app have state UP, with no errors there. $ curl -X GET jenkins-server:8080/jenkins/prometheus/ * Connection #0 to host jenkins-server left intact basic_auth: Curl response $ curl -v jenkins-server:8080/jenkins/prometheus It just shows a blank page in my browsers (IE and Chrome). I have also tried a domain credential password and a token in my prometheus.yml, but it still doesn't show the plugin-generated data at my endpoint. I have added the below in my prometheus.yml - job_name: 'jenkins' Currently LDAP authentication and Project-based Matrix Authorization are configured. My Jenkins base URL - But my Prometheus endpoint - is not showing any metrics data. My Jenkins is 2.263.1 (LTS), deployed through Tomcat; I have installed the Prometheus metrics plugin 2.0.8 and restarted the service.
